The Hierarchical Logic of Binary Search Trees

The efficiency of data retrieval often dictates the success of a software system, and at the heart of efficient retrieval lies the binary search tree . This data structure serves as a fundamental...

The efficiency of data retrieval often dictates the success of a software system, and at the heart of efficient retrieval lies the binary search tree. This data structure serves as a fundamental building block in computer science, reconciling the tension between the fast access of a sorted array and the dynamic flexibility of a linked list. While a simple array allows for binary search in logarithmic time, it suffers from linear time complexity during insertions and deletions. Conversely, a linked list allows for rapid structural changes but forces a linear search to find specific elements. The binary search tree emerges as a elegant hybrid, offering a hierarchical approach that maintains sorted order while supporting dynamic modifications with remarkable speed.

Foundations of Tree-Based Hierarchies

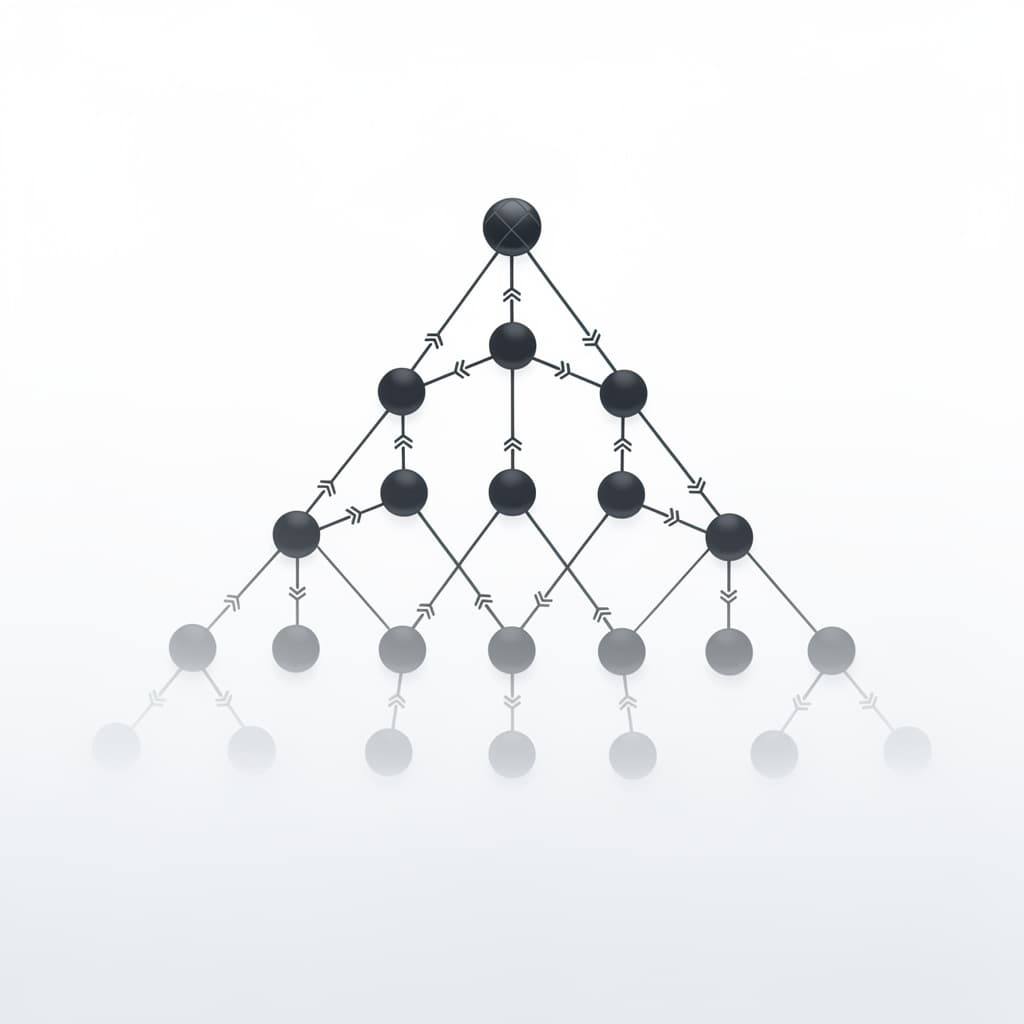

To understand the binary search tree, one must first grasp the broader concept of a binary tree. A binary tree is a non-linear data structure where each element, referred to as a node, contains a data value and references to at most two other nodes, commonly called the left child and the right child. This branching structure creates a parent-child relationship that can be navigated recursively. In a standard binary tree, there is no specific requirement regarding the relative values of these nodes; they are simply organized in a branching fashion. This lack of order makes a generic binary tree inefficient for searching, as one might still need to visit every node to find a specific target, resulting in a time complexity of $O(n)$.

The distinction between a bst vs binary tree lies in the rigorous ordering constraint imposed on the former. In a binary search tree, for any given node with a value $k$, every node in its left subtree must have a value less than $k$, and every node in its right subtree must have a value greater than $k$. This property must hold true for every single node within the structure, not just the root. This "BST invariant" is what transforms a simple hierarchy into a powerful search engine. By enforcing this rule, the structure effectively mimics the logic of a binary search algorithm, where each step at a node allows the system to discard roughly half of the remaining search space.

Every tree begins with a single point of entry known as the root node. If the tree is empty, the root is null; otherwise, it serves as the ultimate ancestor of every other node in the hierarchy. Nodes that do not have any children are referred to as leaf nodes, representing the termination points of various paths through the tree. The depth of a node is the number of edges from the root to that node, while the height of the tree is the length of the longest path from the root to a leaf. Understanding these structural properties is vital because the efficiency of all binary search tree operations is directly tied to the height of the tree, which we aim to keep as small as possible relative to the number of nodes.

The Core Mechanics of Binary Search Tree Operations

The process of adding data to a binary search tree is governed by recursive logic that ensures the BST invariant remains intact. When a new value is introduced, the algorithm begins at the root and compares the new value to the current node's value. If the new value is smaller, the algorithm moves to the left child; if it is larger, it moves to the right. This process continues down the tree until a null reference is reached, at which point the new node is attached. This pathfinding approach ensures that the tree grows in a way that preserves the sorted relationship between all elements, making the insertion process both logical and efficient.

Searching for a value within a binary search tree follows a nearly identical path to insertion. At each node, the search algorithm makes a three-way decision: is the target value equal to the current node, smaller than it, or larger? If it is equal, the search is successful and the node is returned. If it is smaller, the search proceeds exclusively into the left subtree, completely ignoring the right side. This ability to prune the search space at every step is why the BST is so highly regarded. In a well-balanced tree containing one million elements, a search would require only about 20 comparisons, whereas a linear list would require an average of 500,000 comparisons.

Maintaining the BST invariant is the responsibility of every operation that modifies the tree's structure. While searching is a passive read operation, insertion and deletion are active writes that must be handled with care. If an insertion were to ignore the ordering rules, the tree would lose its search utility, reverting to a standard binary tree where the "search" would become a brute-force traversal. Therefore, developers often implement these operations using recursion because the tree structure is inherently recursive. A binary search tree is composed of a root node and two subtrees, both of which are also, by definition, binary search trees.

Principles of Binary Search Tree Traversal

Binary search tree traversal refers to the process of visiting every node in the tree in a specific, systematic order. Unlike linear data structures which have a single obvious path from start to finish, trees offer multiple ways to "walk" through the data. The most common methods are categorized as Depth-First Search (DFS) strategies, which include in-order, pre-order, and post-order traversals. In an in-order traversal, the algorithm visits the left subtree, then the current node, and finally the right subtree. This specific sequence is significant because, in a BST, an in-order traversal visits the nodes in non-decreasing sorted order, which is extremely useful for generating reports or validating the tree's integrity.

Alternatively, pre-order traversal visits the current node first, followed by the left and right subtrees. This method is frequently used to create a copy of the tree or to serialize the structure into a format that can be easily reconstructed later. Because the root is always processed first, a pre-order list provides the necessary sequence to rebuild the exact same hierarchical shape. Post-order traversal, on the other hand, visits the children before the parent. This is the preferred method for deleting a tree or evaluating mathematical expressions represented by trees, as it ensures that "leaves" or sub-components are handled before the "roots" or aggregate operators that depend on them.

Beyond Depth-First strategies, Breadth-First Search (BFS), also known as level-order traversal, visits nodes level by level from top to bottom. This approach typically utilizes a queue data structure to keep track of the children of visited nodes. While DFS is often implemented with a simple recursive stack, BFS is more memory-intensive for wide trees but provides a clearer view of the tree's "breadth." Each of these traversal methods serves a unique purpose in software engineering, from finding the shortest path in a graph-like tree to optimizing memory usage during large-scale data processing tasks.

Complexity of Deletion in Search Trees

Deleting a node from a binary search tree is significantly more complex than insertion because it risks breaking the connectivity of the hierarchy. When a node is removed, the algorithm must identify a way to "patch" the hole while maintaining the BST invariant. The simplest scenario involves leaf nodes; since they have no children, they can be removed immediately by setting the parent's reference to null. The second scenario involves nodes with a single child. In this case, the child node is simply "promoted" to take the place of the deleted parent, similar to removing a link in a chain and connecting the remaining ends.

The most challenging scenario occurs when the node to be deleted has two children. We cannot simply promote one child, as that would leave the other child "orphaned" without a clear place in the hierarchy. To solve this, the algorithm finds the in-order successor (the smallest node in the right subtree) or the in-order predecessor (the largest node in the left subtree). This successor is guaranteed to have at most one child. The value of the successor is copied into the node targeted for deletion, and then the original successor node is removed from its old location using the simpler one-child or leaf-node deletion logic. This swap-and-delete strategy ensures the tree remains a valid BST with minimal restructuring.

Re-establishing structural integrity after a deletion is a "surgical" procedure that requires careful pointer manipulation. One common mistake in manual implementations is failing to correctly update the parent's reference to the newly promoted child, which can result in "memory leaks" or "dangling pointers" where parts of the tree become unreachable. High-level programming languages with garbage collection handle the memory aspects, but the logic of re-linking the nodes remains the developer's responsibility. Because of this complexity, deletion is often the operation that introduces the most bugs in custom data structure implementations.

Evaluating Binary Search Tree Time Complexity

The performance of a binary search tree is inextricably linked to its shape. In the best-case scenario, the tree is perfectly balanced, meaning for every node, the number of nodes in the left and right subtrees is roughly equal. In this state, the height of the tree is $O(\log n)$, where $n$ is the total number of nodes. Consequently, the binary search tree time complexity for search, insertion, and deletion is $O(\log n)$. This logarithmic performance is what makes BSTs so attractive for large datasets, as the number of operations grows very slowly even as the amount of data increases exponentially.

However, the worst-case scenario presents a significant risk. If data is inserted in sorted or nearly sorted order (e.g., 1, 2, 3, 4, 5), the tree becomes degenerate or skewed. In a skewed tree, each node has only one child, effectively turning the hierarchy into a linear linked list. In this unfortunate state, the height of the tree becomes $n$, and the time complexity for standard operations degrades to $O(n)$. This degradation negates the advantages of using a tree in the first place, as searching for the "last" element would require visiting every single node in the structure. The average-case performance remains $O(\log n)$ assuming random distribution of input, but the risk of $O(n)$ behavior is always present in a basic BST.

The following table summarizes the binary search tree time complexity for various operations compared to other common data structures:

| Operation | BST (Average) | BST (Worst) | Sorted Array | Linked List |

|---|---|---|---|---|

| Search | $O(\log n)$ | $O(n)$ | $O(\log n)$ | $O(n)$ |

| Insertion | $O(\log n)$ | $O(n)$ | $O(n)$ | $O(1)$ |

| Deletion | $O(\log n)$ | $O(n)$ | $O(n)$ | $O(1)$ |

The Necessity of Self-Balancing Mechanisms

Because the performance of a binary search tree is so dependent on its height, computer scientists developed self-balancing trees to prevent the occurrence of degenerate structures. A self-balancing tree automatically reorganizes its nodes during insertion and deletion to ensure that the height remains logarithmic relative to the number of nodes. Without these mechanisms, a production system might start fast but gradually slow down as specific data patterns cause the tree to become unbalanced. The most famous examples of these are AVL trees and Red-Black trees, both of which use complex internal rules to maintain structural equilibrium.

The AVL tree, named after its inventors Adelson-Velsky and Landis, maintains a strict balance factor for every node. The height difference between the left and right subtrees of any node can never exceed one. If an insertion or deletion violates this rule, the tree performs rotations. These rotations are algebraic rearrangements of the pointers that "lift" a lower node and "push" a higher node down, effectively decreasing the height of the heavy branch. While AVL trees provide faster lookups due to their strict balancing, they require more overhead during insertions and deletions to maintain that perfect state.

Red-Black trees offer a slightly more relaxed approach to balancing, which often results in better performance for write-heavy workloads. Instead of measuring height directly, they use a system of coloring nodes red or black and following a set of rules regarding the distribution of these colors. This ensures that the longest path from the root to a leaf is no more than twice as long as the shortest path. This "good enough" balance is sufficient to guarantee $O(\log n)$ time complexity while minimizing the number of rotations required during updates. Most modern standard libraries, such as the TreeMap in Java or std::map in C++, use Red-Black trees as their underlying implementation.

A Comprehensive Binary Search Tree Example

To visualize the logic of the binary search tree, consider the construction of a tree using the following dataset: 50, 30, 70, 20, 40, 60, 80. The first value, 50, becomes the root. When 30 arrives, it is compared to 50; since 30 is smaller, it becomes the left child. Next, 70 is compared to 50; since it is larger, it becomes the right child. As 20 and 40 arrive, they are compared to 50 (go left to 30) and then compared to 30 (20 goes left, 40 goes right). Finally, 60 and 80 are compared to 50 (go right to 70) and then compared to 70 (60 goes left, 80 goes right). The result is a perfectly balanced tree with a height of 2, allowing any value to be found in at most three steps.

class Node:

def __init__(self, key):

self.left = None

self.right = None

self.val = key

def insert(root, key):

if root is None:

return Node(key)

else:

if root.val < key:

root.right = insert(root.right, key)

else:

root.left = insert(root.left, key)

return root

# Example usage:

# root = Node(50)

# insert(root, 30)

# insert(root, 70)

Real-world applications of the binary search tree are found in database indexing and file systems. When you execute a query in a SQL database to find a specific user ID, the database doesn't scan the entire table row-by-row. Instead, it often traverses a B-tree or a variation of a balanced BST to locate the record in logarithmic time. This is why indexing columns is a crucial step in database optimization; it creates a searchable hierarchical structure that avoids the $O(n)$ cost of a full table scan. Similarly, many file systems use tree-based structures to organize directories and files on a disk, ensuring that even with millions of files, access remains near-instantaneous.

When comparing BSTs to linear data structures, the primary advantage is the trade-off between search and modification. A sorted array is excellent for searching but terrible for inserting new data, as it requires shifting all subsequent elements. A linked list is excellent for insertion but terrible for searching. The binary search tree provides a middle ground that excels in dynamic environments where data is constantly being added, removed, and queried. By leveraging hierarchical logic rather than linear sequencing, the BST remains one of the most efficient and conceptually elegant solutions for managing ordered data in computer science.

References

- Cormen, T. H., Leiserson, C. E., Rivest, R. L., & Stein, C., "Introduction to Algorithms", MIT Press, 2022.

- Knuth, D. E., "The Art of Computer Programming, Volume 3: Sorting and Searching", Addison-Wesley, 1998.

- Sedgewick, R., & Wayne, K., "Algorithms, 4th Edition", Addison-Wesley, 2011.

- Adelson-Velsky, G. M., & Landis, E. M., "An algorithm for the organization of information", Soviet Mathematics Doklady, 1962.

Recommended Readings

- Algorithms by Robert Sedgewick — A highly visual and practical guide to data structures that provides excellent Java-based implementations and performance analysis.

- Grokking Algorithms by Aditya Bhargava — An illustrated, friendly introduction to the logic of trees and big O notation that is perfect for building intuition.

- The Algorithm Design Manual by Steven S. Skiena — A comprehensive reference that treats data structures as tools for solving real-world engineering problems with a focus on selection and implementation.

- Purely Functional Data Structures by Chris Okasaki — For those interested in how binary search trees are implemented in functional languages like Haskell or Scala, focusing on immutability and persistence.