Binary Search Algorithm: Complete Guide with Examples, Code, and Analysis

Understanding Binary Search Fundamentals Core Definition and Principles The binary search algorithm is one of the most elegant and efficient techniques in computer science for locating a specific...

Understanding Binary Search Fundamentals

Core Definition and Principles

The binary search algorithm is one of the most elegant and efficient techniques in computer science for locating a specific value within a sorted collection of data. Rather than examining each element one by one, binary search repeatedly divides the search space in half, zeroing in on the target with remarkable speed. This divide-and-conquer philosophy is what separates binary search from brute-force approaches, and it underpins much of what makes efficient software possible. Donald Knuth, in his landmark work The Art of Computer Programming (1968), described binary search as deceptively simple to state yet surprisingly tricky to implement correctly — a characterization that has stood the test of time.

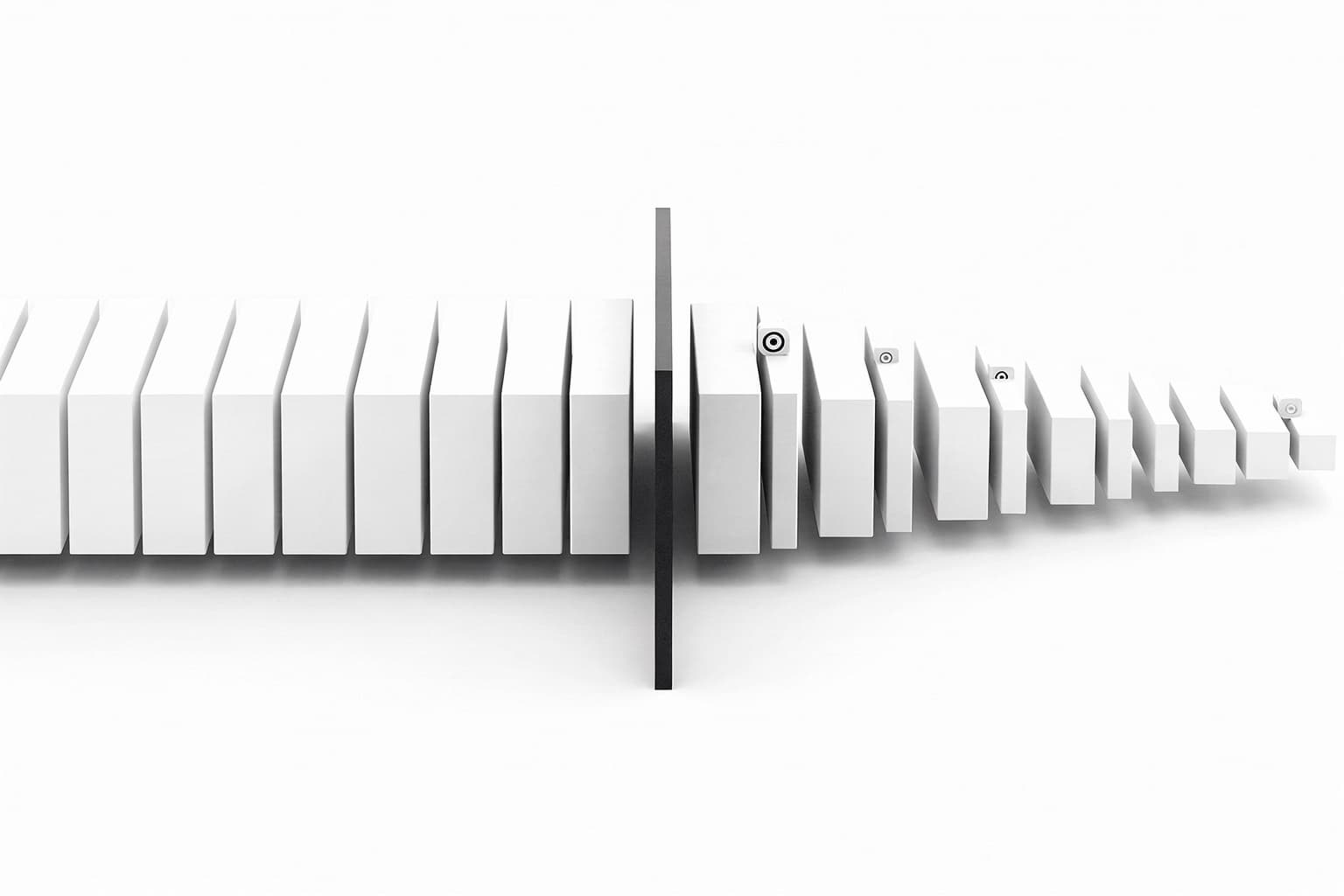

At its core, the algorithm works by maintaining two pointers — a low index and a high index — that define the current search region within the array. On each iteration, it calculates the midpoint of this region and compares the element at that midpoint to the target value. If the midpoint element matches the target, the search is complete. If the target is smaller than the midpoint element, the algorithm discards the right half of the search region; if larger, it discards the left half. This systematic halving continues until either the target is found or the search region collapses to nothing, signaling that the target is absent from the array.

The power of this approach becomes apparent when you consider the scale at which it operates. A sorted array of one billion elements requires at most 30 comparisons to locate any value using binary search, since log₂(1,000,000,000) ≈ 30. This is a profound result: the number of operations needed grows only logarithmically with the size of the input, making binary search practical even for massive datasets where linear scanning would be prohibitively slow. This logarithmic growth rate places binary search in the category of algorithms that scale gracefully, a property engineers value enormously in production systems.

Prerequisites: Sorted Arrays Only

Binary search carries one non-negotiable prerequisite: the data must be sorted in a consistent order, either ascending or descending, before the algorithm can operate correctly. This constraint is not a minor footnote — it is the foundational assumption that makes the entire halving strategy valid. When you discard the left half of the search space because the target is greater than the midpoint, you are implicitly trusting that all elements to the left are smaller. If the array were unsorted, that assumption would break, and the algorithm would silently return incorrect results without any error to alert you. This is one of the most common sources of bugs when developers first implement binary search.

In practice, the sorting prerequisite means that binary search is best suited for static or infrequently modified datasets where a one-time sort pays dividends across many subsequent searches. Sorting an array of n elements typically costs O(n log n) time — for example, using merge sort or Timsort — so if you only need to search once, a simple linear scan at O(n) may actually be cheaper overall. However, when you need to perform hundreds or thousands of searches against the same data, the upfront sorting cost amortizes quickly and binary search becomes the clear winner. Database index structures like B-trees, binary search trees, and sorted inverted indexes all exploit this same principle at architectural scale.

How Binary Search Works

Step-by-Step Algorithm Process

Walking through the binary search algorithm step by step reveals just how systematic and clean its logic is. The process begins by setting low = 0 and high = n - 1, where n is the number of elements in the array. These two variables define the active search window. The algorithm then enters a loop that continues as long as low is less than or equal to high — meaning there is still at least one element left to examine. This loop condition is critical: using strictly less than instead of less-than-or-equal would cause the algorithm to miss the last remaining candidate element, a subtle off-by-one error that has tripped up even experienced programmers.

Inside each loop iteration, the algorithm computes the midpoint index and retrieves the element at that position. Three outcomes are possible: the element equals the target (success — return the index), the element is less than the target (move low to mid + 1, shifting the window right), or the element is greater than the target (move high to mid - 1, shifting the window left). Each of these outcomes either terminates the search or reduces the search space by roughly half. If the loop exits without finding the target — meaning low has exceeded high — the algorithm returns a sentinel value, commonly -1, to indicate that the target is not present in the array.

Midpoint Calculation Technique

The midpoint calculation deserves special attention because the naive formulation contains a subtle but serious bug. The intuitive formula mid = (low + high) / 2 looks correct, but it can cause an integer overflow in languages like C, C++, and Java when low and high are both large integers — specifically when their sum exceeds the maximum value representable by a 32-bit integer (2,147,483,647). This overflow bug went undetected in Java's standard library implementation for nearly a decade before Joshua Bloch documented it in a widely read 2006 blog post. The fix is to compute the midpoint as mid = low + (high - low) / 2, which is mathematically equivalent but avoids the overflow entirely by never adding the two indices together.

In Python, integer overflow is not a concern because the language uses arbitrary-precision integers, but the corrected formula remains the idiomatic choice in most educational and professional contexts because it documents awareness of the overflow issue. In lower-level languages, some developers prefer the bitwise variant mid = (low + high) >>> 1 (in Java) which uses an unsigned right shift to handle the carry bit correctly. Understanding these nuances is part of what distinguishes a programmer who has studied algorithms deeply from one who has only used them superficially. The midpoint calculation is a small detail with large consequences, and it illustrates a recurring theme in algorithm implementation: the devil lives in the boundary conditions.

Search Path Decision Logic

The decision logic after each midpoint comparison is what drives the algorithm's convergence. When the algorithm moves low to mid + 1, it is asserting that the target — if it exists — must lie strictly to the right of mid in the array. Similarly, setting high to mid - 1 asserts the target must lie strictly to the left. The +1 and -1 adjustments are essential because they ensure the midpoint itself is excluded from the next search window; without them, the algorithm could get stuck in an infinite loop examining the same midpoint repeatedly when the search window contains exactly two elements. This careful bookkeeping is what guarantees the algorithm always makes progress and terminates in finite time.

Binary Search Example Walkthrough

Manual Example with Numbers

Consider a sorted array: [2, 5, 8, 12, 16, 23, 38, 45, 56, 72, 91], containing 11 elements indexed from 0 to 10. Suppose the target is 23. Initially, low = 0 and high = 10, so the midpoint is mid = 0 + (10 - 0) / 2 = 5. The element at index 5 is 23 — an immediate match on the very first comparison. This best-case scenario, while satisfying, is not representative; in practice, the target is rarely sitting at the exact midpoint, and a few rounds of elimination are typically required.

Now suppose the target is instead 56. In the first iteration, mid = 5 and array[5] = 23. Since 56 > 23, the algorithm sets low = 6, discarding the entire left half. In the second iteration, the search window is indices 6 through 10, giving mid = 6 + (10 - 6) / 2 = 8 and array[8] = 56. The target is found in just two comparisons across an eleven-element array — a vivid demonstration of how quickly binary search narrows down the candidate range. Even if the array had 10,000 elements, the search would terminate in at most 14 comparisons.

Handling Edge Cases

Edge cases reveal the robustness of a binary search implementation. Searching for a value smaller than the smallest element in the array causes high to shrink below low after just a few iterations, and the loop exits correctly returning "not found." Searching for a value larger than the largest element has the symmetric behavior: low climbs above high. Searching an array of a single element is also clean — the single comparison either matches or causes one of the pointers to cross the other, and the algorithm terminates after one step. The only edge case that requires explicit handling before the algorithm runs is an empty array, where high would be initialized to -1 (since n - 1 = -1 for n = 0), making the loop condition immediately false and returning "not found" without any comparisons.

Duplicate elements introduce an interesting nuance: the standard binary search algorithm will find some occurrence of the target, but not necessarily the first or last one. If the requirement is to find the leftmost or rightmost occurrence of a value — a common need when working with sorted arrays that permit repeated entries — a modified version of the algorithm must be used. These variants, sometimes called lower bound and upper bound searches (mirroring the C++ STL functions std::lower_bound and std::upper_bound), adjust the decision logic to keep searching even after finding a match, converging on the boundary occurrence. This extension is worth understanding because it appears frequently in competitive programming and interval-based query problems.

Binary Search Time Complexity

Big O Notation Breakdown

The time complexity of the binary search algorithm is O(log n), where n is the number of elements in the array. To understand why, consider that each comparison halves the search space. Starting from n elements, after one comparison there are at most n/2 candidates, after two there are at most n/4, and after k comparisons there are at most n / 2^k candidates remaining. The algorithm terminates when the search space reaches size 1, which happens when n / 2^k = 1, meaning k = log₂(n). Therefore, the maximum number of comparisons is:

$$k = \lfloor \log_2(n) \rfloor + 1$$

This logarithmic growth is what makes binary search so powerful at scale. For a dataset of size n = 1,024, the worst case requires 10 comparisons. For n = 1,048,576 (one million), it requires 20 comparisons. For n = 1,073,741,824 (one billion), just 30 comparisons suffice. The gap between O(log n) and O(n) is not merely theoretical — it translates directly into orders-of-magnitude differences in execution time for large arrays. In a world where arrays commonly contain millions of records, this distinction is operationally significant.

Best, Average, Worst Cases

The best case for binary search occurs when the target is located at the midpoint of the initial array, resulting in a single comparison and O(1) time. This happens, for instance, when searching for the median value in a sorted array. The worst case occurs when the target is not present in the array, or when it sits at one of the extreme ends, requiring the search space to be halved all the way down to a single element before conclusion — costing O(log n) comparisons. The average case is also O(log n), because even in typical random-target scenarios, the expected number of comparisons is proportional to the logarithm of the array size. Unlike some algorithms where the average case is dramatically better than the worst case, binary search's best and worst cases are relatively close, which is a desirable property for systems that require predictable performance.

Space Complexity Details

The iterative version of binary search uses O(1) auxiliary space — it maintains only a fixed number of variables (low, high, mid) regardless of the input size, making it exceptionally memory-efficient. The recursive version, by contrast, uses O(log n) stack space because each recursive call adds a new frame to the call stack, and the maximum depth of recursion is log₂(n). For most practical inputs, this difference is negligible — log₂(10^9) ≈ 30 stack frames is trivial. However, in deeply embedded systems with very limited stack memory, or in environments where stack overflow is a real concern, the iterative approach is the safer and more conventional choice. This is why most standard library implementations of binary search, including Python's bisect module and Java's Arrays.binarySearch, use the iterative form.

Iterative Binary Search Implementation

Pseudocode Structure

Before diving into working code, pseudocode provides a language-agnostic blueprint that isolates the algorithm's logic from syntactic noise. The iterative binary search pseudocode reads cleanly: initialize low to 0 and high to the last index, then loop while low ≤ high. Compute mid, compare array[mid] to the target, and update either low or high accordingly, or return mid on a match. If the loop ends without a match, return -1. This pseudocode maps almost directly to any imperative programming language, which is part of what makes binary search such a clean teaching example — the algorithm and its implementation are unusually close together.

Python Code Example

The following Python implementation follows the iterative pattern precisely, using the overflow-safe midpoint formula. It accepts a sorted list and a target value, returning the index of the target if found or -1 if absent.

def binary_search(arr, target):

"""

Iterative binary search.

Returns the index of target in arr if found, otherwise -1.

Assumes arr is sorted in ascending order.

"""

low = 0

high = len(arr) - 1

while low <= high:

mid = low + (high - low) // 2 # Overflow-safe midpoint

if arr[mid] == target:

return mid # Target found at index mid

elif arr[mid] < target:

low = mid + 1 # Discard left half

else:

high = mid - 1 # Discard right half

return -1 # Target not present in array

# Example usage

sorted_array = [2, 5, 8, 12, 16, 23, 38, 45, 56, 72, 91]

print(binary_search(sorted_array, 23)) # Output: 5

print(binary_search(sorted_array, 56)) # Output: 8

print(binary_search(sorted_array, 99)) # Output: -1

print(binary_search(sorted_array, 2)) # Output: 0

print(binary_search(sorted_array, 91)) # Output: 10

Analysis of Loop Efficiency

The iterative implementation is lean and direct. Each iteration of the while loop performs exactly one comparison against the target, plus pointer arithmetic to update low or high. These operations are all constant-time, so the total cost of the loop is proportional to the number of iterations, which is bounded by ⌊log₂(n)⌋ + 1. There is no overhead from function call setup, no risk of stack overflow, and no memory allocation beyond a handful of integer variables. In performance-critical applications — say, a search function called millions of times per second in a trading system or a database query engine — this tight inner loop matters. Many modern processors can also optimize it aggressively: the loop body is small, branch-predictable for most workloads, and cache-friendly since accessing array elements by index is a simple address calculation.

Python's built-in bisect module, part of the standard library since Python 2, provides a highly optimized binary search implementation written in C. The bisect.bisect_left function returns the leftmost insertion point for a target value, effectively performing a lower-bound search. For production Python code, bisect is almost always preferable to a custom implementation because it is faster, tested, and immediately recognizable to other Python programmers. Understanding how to write binary search from scratch remains essential for interviews and low-level work, but recognizing when to use a battle-tested library implementation is equally part of professional software development.

Recursive Binary Search Implementation

Recursive Function Logic

The recursive formulation of binary search expresses the algorithm's divide-and-conquer nature more explicitly than the iterative version, at the cost of call stack overhead. The function takes the array, the target, and the current low and high bounds as parameters. The base case occurs when low > high, meaning the search space is empty and the target is not present; the function returns -1. The recursive case computes the midpoint, checks for a match, and then calls itself on either the left or right subarray depending on the comparison result. Each recursive call reduces the problem size by roughly half, ensuring the recursion terminates after at most log₂(n) levels.

One important design decision in the recursive version is whether to pass the actual subarray (a new slice of the list) or to pass index bounds into the original array. Passing slices is simpler to read but creates new list objects at each level of recursion, raising the space complexity to O(n) — an unnecessary cost. Passing index bounds avoids all memory allocation and keeps space complexity at O(log n) for the call stack alone. The index-bound approach is the standard in practice, even though it makes the function signature slightly more complex, because it respects the principle that the algorithm should not pay for memory it does not need.

Code in Python

The following Python implementation uses the index-bound approach, making the recursive structure explicit while avoiding unnecessary memory allocation.

def binary_search_recursive(arr, target, low, high):

"""

Recursive binary search.

Returns the index of target in arr if found, otherwise -1.

Initial call: binary_search_recursive(arr, target, 0, len(arr) - 1)

"""

# Base case: search space is empty

if low > high:

return -1

mid = low + (high - low) // 2 # Overflow-safe midpoint

if arr[mid] == target:

return mid # Target found

elif arr[mid] < target:

return binary_search_recursive(arr, target, mid + 1, high) # Search right half

else:

return binary_search_recursive(arr, target, low, mid - 1) # Search left half

# Convenience wrapper so callers don't need to pass initial bounds

def binary_search(arr, target):

return binary_search_recursive(arr, target, 0, len(arr) - 1)

# Example usage

sorted_array = [2, 5, 8, 12, 16, 23, 38, 45, 56, 72, 91]

print(binary_search(sorted_array, 23)) # Output: 5

print(binary_search(sorted_array, 56)) # Output: 8

print(binary_search(sorted_array, 99)) # Output: -1

Recursion Depth Considerations

Python imposes a default recursion limit of 1,000 levels (adjustable via sys.setrecursionlimit), which means the recursive binary search function will fail with a RecursionError for any array with more than approximately 2^999 elements — a number so astronomically large it will never appear in practice. For Python specifically, the recursion depth is not a practical concern for binary search. In languages without tail-call optimization, however, the recursive version does incur measurable overhead compared to the iterative version: each function call requires setting up a new stack frame, passing arguments, and tearing the frame down on return. In languages like Scheme or Haskell, which do support tail-call optimization, the recursive and iterative versions compile to essentially equivalent machine code, making the choice purely stylistic.

The recursive implementation is often preferred in academic settings because it mirrors the mathematical definition of binary search as a recurrence relation: T(n) = T(n/2) + O(1), with the base case T(1) = O(1). Solving this recurrence via the Master Theorem immediately yields T(n) = O(log n), providing a formal proof of the algorithm's complexity. This connection between recursive code and recurrence relations is one of the reasons computer science curricula emphasize recursive thinking — it bridges clean code and rigorous analysis in a way that iterative code does not.

Binary Search vs Linear Search

Key Differences Compared

Linear search, also called sequential search, scans through an array element by element from the beginning until it finds the target or exhausts the array. It requires no preprocessing — the array can be in any order — and its implementation is trivially simple. Binary search, by contrast, demands a sorted array but rewards that constraint with dramatically faster searches. For small arrays (typically fewer than 10 to 20 elements), the difference is negligible, and linear search may even be faster in practice due to its simpler logic and better cache behavior on tiny data. As arrays grow into the thousands or millions of elements, however, the O(n) vs O(log n) gap becomes profound and practically decisive.

Another important distinction is adaptability. Linear search works on any data structure that supports sequential access — arrays, linked lists, streams, generators — whereas binary search requires random access (the ability to jump directly to index mid in O(1) time). This makes binary search inherently tied to array-like structures and unsuitable for linked lists without modification. Additionally, if the dataset changes frequently — elements are inserted or deleted regularly — maintaining the sorted order required by binary search introduces overhead. In such dynamic scenarios, balanced binary search trees (like AVL trees or red-black trees) or skip lists provide O(log n) search while also supporting efficient updates, offering the best of both worlds.

Performance Metrics Table

The following table summarizes the key performance and usability characteristics of binary search versus linear search across the most important dimensions.

| Characteristic | Binary Search | Linear Search |

|---|---|---|

| Time Complexity (Best Case) | O(1) | O(1) |

| Time Complexity (Average Case) | O(log n) | O(n) |

| Time Complexity (Worst Case) | O(log n) | O(n) |

| Space Complexity (Iterative) | O(1) | O(1) |

| Space Complexity (Recursive) | O(log n) | O(n) |

| Prerequisite | Sorted array required | None — works on unsorted data |

| Data Structure Requirement | Random access (array) | Sequential access sufficient |

| Implementation Complexity | Moderate (boundary logic required) | Trivial |

| Comparisons for n = 1,000,000 | ~20 | Up to 1,000,000 |

| Suitable for Dynamic Data? | No (requires re-sorting on update) | Yes |

Choosing the Right Method

The choice between binary search and linear search is ultimately a question of context and constraints. When the data is already sorted — or when sorting it once and searching it many times — binary search is almost universally the right choice for arrays of non-trivial size. The standard rule of thumb among practitioners is that once an array exceeds roughly 50 elements and is searched repeatedly, the case for binary search becomes compelling on performance grounds alone. For one-off searches of small arrays, linear search's simplicity and freedom from sorting requirements make it entirely appropriate, and no engineer should feel compelled to sort data just to use the fancier algorithm.

In real-world systems, binary search appears not just as a standalone function but as a conceptual building block. Database engines use binary search on sorted index pages to locate rows in O(log n) disk reads. The git bisect command uses binary search across a commit history to identify which commit introduced a bug — a beautifully practical application of the same halving logic. Version control, DNS lookups, autocomplete systems, and IP routing tables all exploit sorted data and binary or logarithmic search strategies at their cores. Understanding the binary search algorithm deeply — not just its code, but its logic, its limitations, and its complexity guarantees — is therefore not an academic exercise. It is foundational knowledge for anyone who designs or analyzes software at scale.