Big O Notation: Complete Guide to Time and Space Complexity for Beginners

Big O notation provides a mathematical framework for analyzing the efficiency of algorithms by describing their time and space complexity in terms of input size. This guide offers a complete...

Big O notation provides a mathematical framework for analyzing the efficiency of algorithms by describing their time and space complexity in terms of input size. This guide offers a complete beginner-friendly explanation of big o notation, including definitions, common notations, real-world examples, and analysis techniques. Whether you're preparing for coding interviews or studying computer science, understanding big o notation explained helps evaluate algorithm performance beyond hardware specifics. From constant O(1) to quadratic O(n²), this article covers time complexity big o, space complexity explained, and more, with a big o notation cheat sheet for quick reference.

What is Big O Notation?

Definition of Big O Notation

Big O notation formally describes the upper bound of an algorithm's running time or space requirements as a function of the input size n, ignoring constants and lower-order terms. Introduced by German mathematician Paul Bachmann in 1894 and popularized by Edmund Landau in 1909, it belongs to a family of asymptotic notations including Big Ω (lower bound) and Big Θ (tight bound). Mathematically, a function f(n) is O(g(n)) if there exist positive constants c and n₀ such that for all n ≥ n₀,

$$f(n) \leq c \cdot g(n).$$

This captures the worst-case growth rate, essential for comparing algorithms scalably. For instance, if an algorithm takes 5n² + 3n steps, its Big O is O(n²), focusing on the dominant quadratic term.

Why Big O Notation Matters for Programmers

Big O notation abstracts away machine-dependent factors like processor speed or language optimizations, allowing programmers to predict scalability for large inputs. In software engineering, it guides choices between algorithms: a O(n log n) sort outperforms O(n²) for millions of records, as seen in database queries or machine learning datasets. Donald Knuth, in his 1976 book "The Art of Computer Programming," emphasized its role in algorithm design. Interviews at companies like Google prioritize Big O analysis to assess problem-solving under scale.

Real-world applications abound: search engines use O(log n) indexing for billions of pages, while social networks employ O(1) graph traversals for friend recommendations. Without it, optimizations remain anecdotal.

Dropping Constants and Lower-Order Terms

A core principle of Big O simplifies analysis by discarding multiplicative constants and non-dominant terms. Consider T(n) = 3n² + 100n + 50; as n grows, n² dominates, so T(n) = O(n²). Formally, divide by the highest-degree term: constants like 3 become irrelevant since c=4 bounds it for large n.

This "drops" practice extends to space: O(4n + log n) simplifies to O(n). Analogy: like describing a rocket's fuel needs by distance, ignoring minor leaks—focus on the journey's scale.

Time Complexity Big O Explained

What is Time Complexity?

Time complexity quantifies the computational steps an algorithm requires relative to input size n, expressed via Big O. It models execution time asymptotically, assuming unit cost per operation. For loops, count iterations; for recursion, solve recurrences. In "Introduction to Algorithms" (CLRS, 3rd ed., 2009) by Cormen et al., time complexity underpins empirical testing.

Example: Summing an array of n elements takes Θ(n) time—linear in n. Hardware variances (e.g., cache misses) are ignored for theoretical purity.

How Big O Measures Algorithm Efficiency

Big O provides an upper limit on growth, prioritizing worst-case for guarantees. Efficiency tiers from O(1) (instant) to exponential O(2n) (infeasible for n=50). Plot growth: for n=106, O(n) is 1 million operations (seconds), O(n²) is 1012 (years on a GHz CPU).

- Scalability insight: Doubling input doubles time for O(n), quadruples for O(n²).

- Practical tip: Profile small n empirically, extrapolate with Big O.

Best, Average, and Worst-Case Scenarios

Algorithms exhibit varying behaviors:

- Best-case O: Minimum steps, e.g., sorted input for insertion sort (O(n)).

- Average-case: Expected over random inputs, often Θ.

- Worst-case O: Maximum, focus for reliability (e.g., reverse-sorted bubble sort O(n²)).

Amortized analysis, like hash tables' O(1) average, balances rare spikes. Quickselect's average O(n) vs. worst O(n²) illustrates pivot choice's impact.

Space Complexity Explained

Understanding Space or Memory Usage

Space complexity measures memory as a function of n, including input, output, and auxiliary space. Critical for embedded systems or big data, where RAM limits scale. Like time, Big O drops constants: an array of n integers is O(n), ignoring 4 bytes per int.

In recursion, stack space adds O(depth); tail recursion optimizes to O(1). Modern JVMs garbage-collect, but leaks amplify poor complexity.

Auxiliary Space vs. Input Space

Total space = input + auxiliary + output. Auxiliary space is extra workspace, excluding input. In-place sorts like heapsort use O(1) aux; mergesort needs O(n).

| Algorithm | Total Space | Aux Space |

|---|---|---|

| Array Sum | O(n) | O(1) |

| Mergesort | O(n) | O(n) |

| Quicksort (in-place) | O(n) | O(log n) |

Calculating Space Complexity Examples

Python Fibonacci recursive: O(n) stack + O(n) memo if dynamic. Iterative: O(1) vars.

def fib(n):

if n <= 1: return n

return fib(n-1) + fib(n-2) # Space: O(n) recursion depth

Hash table: O(n) for n entries, assuming load factor <1.

Common Big O Notations

O(1) - Constant Time

O(1) performs fixed operations regardless of n. Array access by index or hash lookup. Analogy: dictionary word lookup—instant.

let arr = [1,2,3];

console.log(arr[0]); // O(1)

O(log n) - Logarithmic Time

Each step halves search space, e.g., binary search on sorted array. Growth: log₂(109) ≈ 30 steps. Balanced BST operations.

O(n) - Linear Time

Single pass: linear search, array print. Doubling n doubles time.

O(n log n) - Linearithmic

Optimal comparison sorts (mergesort, heapsort). log n per element. Industry standard for n=106: ~20n operations.

O(n²) - Quadratic and Higher

Nested loops: bubble sort. Fine for n=1000 (1M ops), disastrous for n=105. O(n³) matrix multiply naive; Strassen's O(n2.807) improves.

Big O Notation Examples

Array Traversal Example

Sum array: one loop, O(n) time, O(1) space.

def array_sum(arr):

total = 0

for num in arr: # n iterations

total += num

return total # Time: O(n), Space: O(1)

Binary Search Example

Halves interval: O(log n).

public int binarySearch(int[] arr, int target) {

int left = 0, right = arr.length - 1;

while (left <= right) { // log n steps

int mid = left + (right - left) / 2;

if (arr[mid] == target) return mid;

else if (arr[mid] < target) left = mid + 1;

else right = mid - 1;

}

return -1; // Time: O(log n), Space: O(1)

}

Bubble Sort vs. Merge Sort

Bubble: O(n²) nested swaps.

def bubble_sort(arr):

n = len(arr)

for i in range(n): # O(n)

for j in range(0, n-i-1): # O(n)

if arr[j] > arr[j+1]:

arr[j], arr[j+1] = arr[j+1], arr[j]

# Time: O(n²), Space: O(1)

Merge: Divide-conquer, O(n log n) time, O(n) space. For n=106, bubble ~1012 ops (impossible), merge ~2x107 (fast).

Big O Notation Cheat Sheet

Quick Reference Table for Complexities

| Notation | Name | Operations (n=106) | Examples |

|---|---|---|---|

| O(1) | Constant | 1 | Hash access |

| O(log n) | Logarithmic | 20 | Binary search |

| O(n) | Linear | 1M | Loop traversal |

| O(n log n) | Linearithmic | 20M | Mergesort |

| O(n²) | Quadratic | 1012 | Bubble sort |

| O(2n) | Exponential | Infinite | Subset sum |

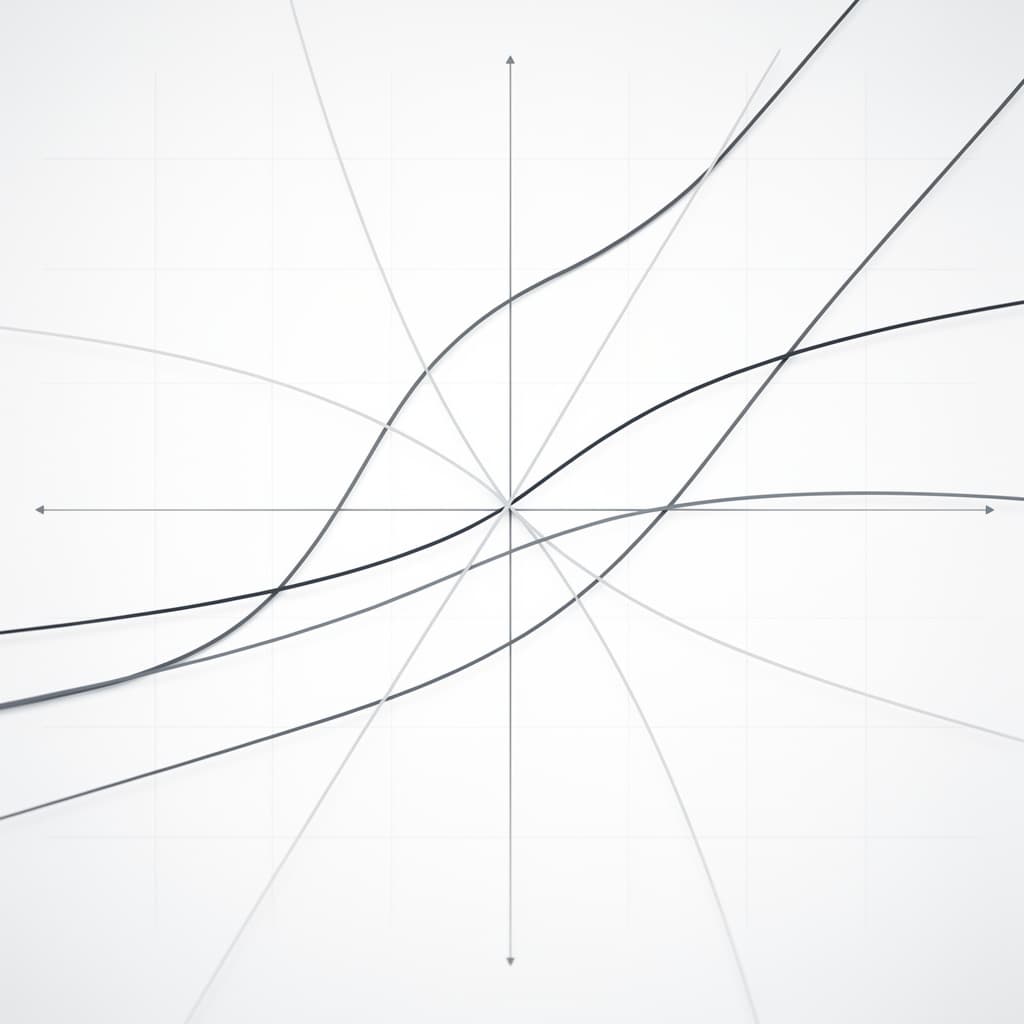

Visual Growth Rate Comparisons

Order from best to worst: O(1) < O(log n) < O(n) < O(n log n) < O(n²) < O(nk) < O(2n). For n=100: O(n²)=10k (ok), n=10k: 100M (slow).

Tips for Memorizing Common Notations

- Loops: Single=O(n), nested=O(n²).

- Divide-half: +log n.

- Mnemonics: "Log for binary, quad for pairs."

How to Analyze Big O Complexity

Step-by-Step Algorithm Analysis

- Identify basic operations (e.g., comparisons).

- Count per input size: loops multiply.

- Highest term dominates.

- Sum: T(n) = Σ.

Example: Two loops, one O(n), one O(n²): O(n²).

Recursion and Big O

Recurrences: T(n) = T(n-1) + 1 = O(n). Tree method: count levels x branches.

def factorial(n):

if n == 0: return 1

return n * factorial(n-1) # T(n) = T(n-1) + O(1) = O(n)

Master Theorem Basics

For T(n) = a T(n/b) + f(n), compare f(n) to nlog_b a:

- If f(n) = O(nlog_b a - ε): T(n)=Θ(nlog_b a).

- If f(n)=Θ(nlog_b a): Θ(nlog_b a log n).

- If f(n)=Ω(nlog_b a + ε): Θ(f(n)).

Mergesort: a=2,b=2,f(n)=O(n) → case 2, O(n log n).

Frequently Asked Questions (FAQ)

What is the difference between Big O, Omega, and Theta?

Big O: upper bound ≤. Big Ω: lower ≥. Big Θ: tight both. E.g., mergesort Θ(n log n) all cases.

Is Big O only for time complexity?

No, applies to space, I/O too. Primarily time/space in algorithms.

How does Big O help in interviews?

Demonstrates scalability thinking; analyze LeetCode problems on-the-fly.

Key Takeaways

Master big o notation to select efficient algorithms: prioritize O(n log n) over O(n²), analyze via loops/recurrences. Use the big o notation cheat sheet for reference, practice with big o notation examples. Common pitfalls: forgetting recursion space, ignoring aux. With tools like Master Theorem, derive complexities confidently for real-world coding challenges.