The Dynamic Logic of Chemical Equilibrium

The concept of chemical equilibrium represents one of the most profound realizations in the history of physical science, marking the transition from a static view of matter to a dynamic, probabilistic understanding of molecular behavior. For much of the early history of chemistry, reactions were viewed as one-way streets where reactants were simply "consumed" until they vanished, leaving behind a finished product. However, as experimental precision improved, scientists began to observe that many reactions appeared to "stall" mid-way, leaving a mixture of both starting materials and products. This observation led to the understanding that chemical change is often a two-way process, where the forward and reverse transformations occur simultaneously. At the point of equilibrium, these competing processes achieve a state of perfect balance, where the macroscopic properties of the system—such as color, pressure, and concentration—remain constant even though the microscopic activity continues unabated.

The Foundations of Reversibility

The Nature of Forward and Reverse Rates

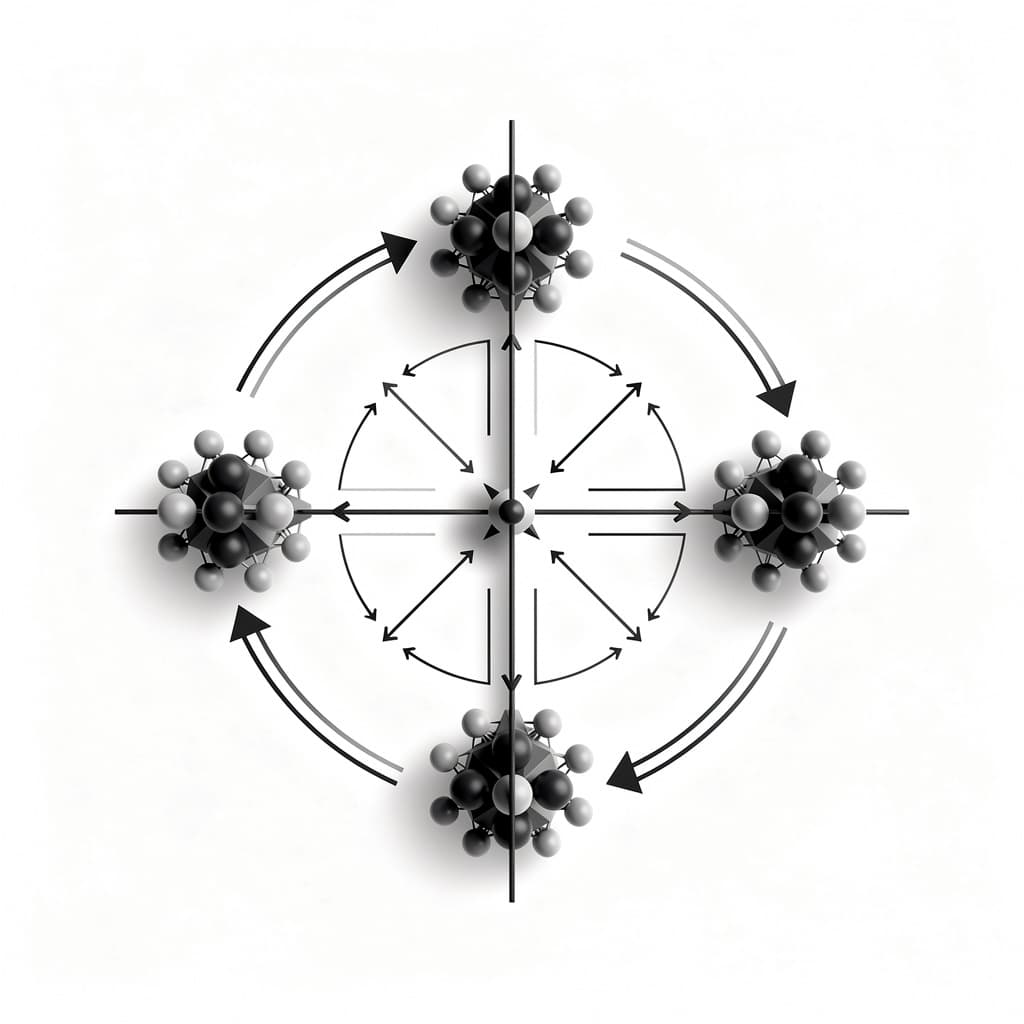

To understand equilibrium, one must first embrace the concept of reversible reactions, which are chemical processes that can proceed in both forward and backward directions. In a forward reaction, reactant molecules collide with sufficient energy and correct orientation to break existing bonds and form new ones, resulting in products. Simultaneously, as product molecules accumulate, they too begin to collide with one another, occasionally undergoing the reverse process to regenerate the original reactants. The rate of these reactions is governed by the collision theory, which posits that the frequency and energy of molecular impacts determine how quickly a transformation occurs. Initially, the forward rate is high due to the abundance of reactants, while the reverse rate is zero; as the reaction progresses, the forward rate decreases and the reverse rate increases until they eventually meet.

The realization that reactions could be reversed was famously documented by Claude Louis Berthollet in 1799 during his travels to Egypt. He observed the formation of sodium carbonate crusts on the shores of salt lakes, a phenomenon that seemed to contradict the established affinity laws of the time. According to the laboratory knowledge of the era, calcium carbonate and sodium chloride should not react to form sodium carbonate and calcium chloride, yet the high concentration of salt in the lake pushed the "impossible" reverse reaction forward. This led Berthollet to conclude that the mass or concentration of substances plays a decisive role in the direction of chemical change. Modern chemistry formalizes this by stating that for any reversible system, a point exists where the forward rate $r_f$ and the reverse rate $r_r$ are exactly equal, meaning that for every molecule of product formed, another is converted back into reactant.

Identifying Closed and Open Systems

A critical requirement for achieving a true state of chemical equilibrium is the presence of a closed system. In a closed system, energy may be exchanged with the surroundings, but matter is contained, ensuring that no reactants or products can escape. If a reaction produces a gas, such as carbon dioxide, in an open container, that gas will diffuse into the atmosphere, preventing the reverse reaction from occurring because the necessary "building blocks" are no longer present. Consequently, a reaction that loses a product to its surroundings tends to run to completion instead of settling into a balanced mixture. In the laboratory, we often use sealed flasks or pressurized vessels to create closed conditions, allowing us to study the inherent stability of the chemical mixture without external interference.

Conversely, in an open system, such as a campfire or a flowing stream, equilibrium is rarely achieved in the classical sense; instead, these systems may reach a "steady state" where the input of material equals the output. While a steady state might appear constant to the naked eye, it differs fundamentally from equilibrium because it requires a continuous flux of energy and matter to maintain. In a true equilibrium state, the stability is intrinsic and does not require external work to persist once established. Understanding this distinction is vital for industrial chemists who must decide whether to trap products to reach a specific balance or to continuously remove them to prevent the reverse reaction from reclaiming the desired yield. By controlling the "closure" of the system, scientists can manipulate whether a reaction behaves as a permanent transformation or a flexible, dynamic balance.

Defining the Dynamic State

Microscopic Motion versus Macroscopic Stability

The term dynamic equilibrium is often used to emphasize that the apparent stillness of a system at equilibrium is a macroscopic illusion. If we were to shrink down to the molecular level, we would witness a scene of chaotic activity: molecules are constantly colliding, bonds are snapping, and new structures are emerging at incredible speeds. However, because the rate of the forward reaction perfectly offsets the rate of the reverse reaction, the total number of reactant and product molecules remains unchanged over time. It is helpful to imagine a runner on a treadmill whose belt moves backward at exactly the runner's pace; the runner works vigorously, yet their position in the room remains fixed. In chemistry, this means that properties such as molarity and partial pressure plot as flat lines against time, even though individual particles continue to react.

This dynamic nature is easily demonstrated through the use of isotopic labeling, a technique where a specific atom in a reactant is replaced with a heavier isotope. If a system is at equilibrium and we introduce a small amount of labeled reactant, we will eventually find those labeled atoms incorporated into the product molecules, even though the total concentration of products does not change. This provides irrefutable proof that the "forward" and "reverse" pathways remain active. The stability of the equilibrium state is therefore not a result of molecular "laziness," but rather a statistical consequence of large numbers of particles reaching a probabilistic stalemate. This concept shifts our focus from the individual behavior of a single molecule to the collective behavior of a population, which is governed by the laws of thermodynamics and kinetics.

The Kinetic Basis of Equilibrium State

To provide a formal structure to this dynamic balance, we look to the kinetic rate laws that describe how fast reactions occur. For an elementary reversible reaction $A + B \rightleftharpoons C + D$, the forward rate can be expressed as $r_f = k_f [A][B]$ and the reverse rate as $r_r = k_r [C][D]$, where $k_f$ and $k_r$ are the rate constants for the respective directions. At the moment equilibrium is reached, we set these two expressions equal to each other: $k_f [A][B] = k_r [C][D]$. By rearranging this equality, we can isolate the constants on one side and the concentrations on the other, leading to a ratio that defines the "position" of the equilibrium. This ratio, $k_f / k_r$, is a constant for a given temperature and serves as the foundation for the mathematical description of the system.
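The approach to this balance can be sketched numerically. The snippet below is a minimal illustration, not a production integrator: it assumes both directions are elementary steps, uses simple forward-Euler time stepping, and the rate constants and starting concentrations are hypothetical values chosen only for demonstration.

```python
def simulate_reversible(kf, kr, a0, b0, c0=0.0, d0=0.0, dt=1e-3, steps=50_000):
    """Forward-Euler integration of A + B <=> C + D (elementary steps assumed)."""
    a, b, c, d = a0, b0, c0, d0
    for _ in range(steps):
        r_f = kf * a * b          # forward rate, r_f = k_f [A][B]
        r_r = kr * c * d          # reverse rate, r_r = k_r [C][D]
        net = (r_f - r_r) * dt    # net conversion during this time step
        a, b, c, d = a - net, b - net, c + net, d + net
    return a, b, c, d


# Hypothetical rate constants and starting concentrations (mol/L).
kf, kr = 2.0, 0.5
a, b, c, d = simulate_reversible(kf, kr, a0=1.0, b0=1.0)
ratio = (c * d) / (a * b)
print(f"[C][D]/[A][B] = {ratio:.4f}, kf/kr = {kf / kr:.4f}")
```

Once the net conversion per step has decayed to zero, the concentration ratio $[C][D]/[A][B]$ matches $k_f / k_r$, which is the equality derived above.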

This kinetic derivation highlights that the equilibrium state is fundamentally linked to the inherent "speed" of the forward and reverse processes. If the forward rate constant $k_f$ is much larger than $k_r$, the system will naturally settle at a point where products are much more abundant than reactants. However, even if one direction is significantly "faster" in terms of its rate constant, the increasing concentration of the "slower" side eventually compensates for the discrepancy, as rate is a product of both the constant and the concentration. This beautiful symmetry ensures that any reversible reaction, no matter how lopsided the energy barriers may be, will eventually find a point of balance. It also underscores why catalysts, which lower the activation energy for both the forward and reverse reactions equally, do not change the position of equilibrium; they simply allow the system to reach that balance more rapidly.
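The catalyst argument can be made explicit with a short derivation. This is a sketch that assumes both rate constants follow the Arrhenius form, with pre-exponential factors $A_f$ and $A_r$ and activation energies $E_{a,f}$ and $E_{a,r}$ (symbols introduced here for illustration), and that the catalyst lowers both barriers by the same amount $\delta$:

$$k_f = A_f \, e^{-E_{a,f}/RT}, \qquad k_r = A_r \, e^{-E_{a,r}/RT}$$

$$k_f' = A_f \, e^{-(E_{a,f} - \delta)/RT} = k_f \, e^{\delta/RT}, \qquad k_r' = A_r \, e^{-(E_{a,r} - \delta)/RT} = k_r \, e^{\delta/RT}$$

$$\frac{k_f'}{k_r'} = \frac{k_f \, e^{\delta/RT}}{k_r \, e^{\delta/RT}} = \frac{k_f}{k_r}$$

Both rates are multiplied by the same factor $e^{\delta/RT}$, so the system reaches balance sooner while the ratio that fixes the equilibrium position is untouched.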

The Law of Mass Action

Deriving the Equilibrium Expression

In 1864, Norwegian scientists Peter Waage and Cato Guldberg formalized the relationship between reactant and product concentrations in what is now known as the Law of Mass Action. They proposed that for any chemical reaction at a constant temperature, a specific ratio of concentrations remains constant once equilibrium is reached. For a general chemical equation of the form:

$$aA + bB \rightleftharpoons cC + dD$$

the equilibrium expression is written as:

$$K_c = \frac{[C]^c [D]^d}{[A]^a [B]^b}$$

Here, $K_c$ is the equilibrium constant, and the brackets denote the molar concentrations of the species involved. The exponents in the expression correspond exactly to the stoichiometric coefficients from the balanced chemical equation. For an elementary step this mirrors the number of molecules that must collide simultaneously, but the expression holds for any balanced equation at equilibrium, whatever the underlying mechanism.

The Law of Mass Action applies to systems in a single phase (homogeneous) as well as those involving multiple phases (heterogeneous). However, in heterogeneous equilibrium, the concentrations of pure solids and pure liquids are omitted from the expression. This is because the density, and thus the "effective concentration," of a pure solid or liquid does not change significantly regardless of how much of the substance is present. For example, in the decomposition of calcium carbonate ($CaCO_3(s) \rightleftharpoons CaO(s) + CO_2(g)$), the equilibrium constant is simply $K_c = [CO_2]$. This simplification reminds us that equilibrium is governed by the activities of the species involved, and for pure solids and liquids, these activities are defined as unity, effectively making them "invisible" to the ratio calculation.
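The omission rule is easy to encode. The following sketch evaluates a mass-action ratio from a list of species; the function name, the tuple layout, and the sample concentrations are all assumptions made for illustration, and concentrations are used in place of activities.

```python
from math import prod


def mass_action(reactants, products):
    """Evaluate the mass-action ratio.

    Each side is a list of (concentration, coefficient, phase) tuples.
    Pure solids ("s") and pure liquids ("l") are skipped (activity taken as 1).
    """
    def side(species):
        return prod(conc ** coeff
                    for conc, coeff, phase in species
                    if phase in ("g", "aq"))
    return side(products) / side(reactants)


# Homogeneous example with hypothetical concentrations: A + 2 B <=> C
k_homogeneous = mass_action(
    reactants=[(0.5, 1, "aq"), (0.2, 2, "aq")],
    products=[(0.1, 1, "aq")],
)

# Heterogeneous example: CaCO3(s) <=> CaO(s) + CO2(g), hypothetical [CO2]
k_heterogeneous = mass_action(
    reactants=[(None, 1, "s")],
    products=[(None, 1, "s"), (0.012, 1, "g")],
)
print(k_homogeneous, k_heterogeneous)
```

Because the solids are filtered out before any arithmetic happens, the second result reduces to the $CO_2$ concentration alone, mirroring $K_c = [CO_2]$.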

Interpreting the Magnitude of the Equilibrium Constant

The numerical value of the equilibrium constant $K$ serves as a powerful diagnostic tool for predicting the composition of a reaction mixture. When $K$ is much greater than 1 (often written as $K \gg 1$), it indicates that the numerator of the expression—the products—is significantly larger than the denominator. In such cases, we say that the equilibrium "lies to the right," and the reaction proceeds nearly to completion under the given conditions. This is typical of highly spontaneous reactions, such as the combustion of hydrocarbons, where the resulting state is dominated by the stable products of water and carbon dioxide. Scientists and engineers look for high $K$ values when the goal is maximum yield without the need for complex recycling of reactants.

Conversely, an equilibrium constant much smaller than 1 ($K \ll 1$) signifies that the reaction barely proceeds at all, with the equilibrium mixture consisting primarily of unreacted starting materials. In these scenarios, the equilibrium "lies to the left," and the reverse reaction is much more favorable than the forward one. Many weak acids, such as acetic acid in vinegar, have very small $K_a$ values, meaning only a tiny fraction of the molecules actually dissociate into ions in water. Understanding the magnitude of $K$ allows chemists to manage expectations; if $K$ is intermediate (near 1), the system will contain significant amounts of both reactants and products, requiring more sophisticated separation techniques to isolate the desired chemicals. Thus, $K$ is not just a number, but a window into the "preference" of the universe for a particular chemical state.
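To put a number on "a tiny fraction", the sketch below solves the dissociation equilibrium $HA \rightleftharpoons H^+ + A^-$ for acetic acid. It assumes a commonly quoted approximate value of $K_a \approx 1.8 \times 10^{-5}$ near 25 °C and a hypothetical 0.10 mol/L solution, and it ignores activity corrections and the self-ionization of water.

```python
from math import sqrt


def fraction_dissociated(ka, c0):
    """Fraction of a weak acid HA that ionizes at initial concentration c0.

    Solves Ka = x^2 / (c0 - x), i.e. x^2 + Ka*x - Ka*c0 = 0, for the positive root.
    """
    x = (-ka + sqrt(ka * ka + 4.0 * ka * c0)) / 2.0
    return x / c0


KA_ACETIC = 1.8e-5   # approximate literature value, assumed here
C0 = 0.10            # mol/L, hypothetical solution strength
alpha = fraction_dissociated(KA_ACETIC, C0)
print(f"fraction dissociated: {alpha:.2%}")
```

Under these assumptions only a little over one percent of the acid molecules are ionized at any instant, which is what a small $K_a$ means in practice.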

Predicting System Direction

The Relationship Between Reaction Quotient and K

While the equilibrium constant $K$ tells us where a system will eventually end up, we often need to know which way a reaction will shift if it is not currently at equilibrium. To determine this, we use the reaction quotient ($Q$), which is calculated using the same mathematical formula as $K$ but employs the instantaneous concentrations of the substances rather than their equilibrium values. By comparing the calculated value of $Q$ to the known value of $K$, we can determine the "distance" the system must travel to achieve balance. This comparison acts as a chemical compass, pointing the way toward the state of lowest free energy.

The logic of the comparison is straightforward: if $Q < K$, the current ratio of products to reactants is too low, and the system must produce more products to reach equilibrium, causing a "shift to the right." If $Q > K$, the concentration of products is in excess relative to the stable state, forcing the reverse reaction to dominate and causing a "shift to the left." Finally, if $Q = K$, the system is already at equilibrium, and no net change in concentration will occur. This predictive capability is essential for industrial processes where reactants are added or products are removed intermittently, as it allows operators to calculate how the system will respond to these perturbations as it returns to equilibrium.
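The three cases translate directly into a small decision function. This is a sketch: the function name, the tolerance used to decide that $Q$ and $K$ are "equal", and the sample numbers are illustrative choices.

```python
import math


def shift_direction(q, k, rel_tol=1e-9):
    """Compare the reaction quotient Q with the equilibrium constant K."""
    if math.isclose(q, k, rel_tol=rel_tol):
        return "at equilibrium"
    if q < k:
        return "shifts right (toward products)"
    return "shifts left (toward reactants)"


# Hypothetical values for demonstration.
for q in (0.02, 4.0, 150.0):
    print(q, "->", shift_direction(q, k=4.0))
```

A tolerance is used instead of an exact equality test because measured or computed concentrations rarely reproduce $K$ to the last digit.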

Calculating the Instantaneous Reaction State

In practice, calculating $Q$ involves taking a "snapshot" of the reaction at a specific point in time. For instance, if a chemist mixes 2.0 moles of gas A and 1.0 mole of gas B in a 1-liter container, they can calculate $Q$ immediately and compare it to the known $K$ for that temperature. For gas-phase reactions, the reaction quotient is often expressed in terms of partial pressures ($Q_p$) rather than molarities ($Q_c$). The two forms are related through the ideal gas law by the factor $(RT)^{\Delta n}$, so that $Q_p = Q_c (RT)^{\Delta n}$, where $\Delta n$ is the change in the number of moles of gas during the reaction.
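The origin of the $(RT)^{\Delta n}$ factor follows in a few lines. Assuming ideal-gas behavior, each partial pressure is $p_i = [i]RT$, so for the general gas-phase reaction $aA + bB \rightleftharpoons cC + dD$:

$$Q_p = \frac{p_C^{\,c} \, p_D^{\,d}}{p_A^{\,a} \, p_B^{\,b}} = \frac{([C]RT)^c \, ([D]RT)^d}{([A]RT)^a \, ([B]RT)^b} = \frac{[C]^c [D]^d}{[A]^a [B]^b} \, (RT)^{(c+d)-(a+b)} = Q_c \, (RT)^{\Delta n}$$

The same relation holds between $K_p$ and $K_c$ at equilibrium. When $\Delta n = 0$ the two quotients are numerically identical; otherwise $R$ must be expressed in units consistent with the pressure unit used, for example $0.08206\ \text{L·atm·mol}^{-1}\text{·K}^{-1}$ for pressures in atmospheres.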

Consider the synthesis of ammonia: $N_2(g) + 3H_2(g) \rightleftharpoons 2NH_3(g)$. If a reactor contains high pressures of nitrogen and hydrogen but very little ammonia, $Q_p$ will be very small, much smaller than $K_p$. This mathematical disparity indicates a high "driving force" for the reaction to move forward. As the reaction proceeds and ammonia accumulates, $Q_p$ increases. The system continues to evolve until the ratio $p_{NH_3}^2 / (p_{N_2} \, p_{H_2}^3)$ equals $K_p$. This transition from $Q$ to $K$ represents the physical manifestation of the second law of thermodynamics: at constant temperature and pressure the system moves toward minimum Gibbs free energy, which corresponds to maximum total entropy of the system plus its surroundings.
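The climb of $Q_p$ toward $K_p$ can be followed numerically. The sketch below assumes a rigid vessel at constant temperature (so partial pressures change in stoichiometric proportion), starts with no ammonia, and uses a hypothetical $K_p$ of 0.5 in units of atm$^{-2}$; none of these numbers describe a real reactor. Because $Q_p$ rises monotonically as the reaction advances, simple bisection finds the extent at which $Q_p = K_p$.

```python
def q_p(p_n2, p_h2, p_nh3):
    """Reaction quotient for N2 + 3 H2 <=> 2 NH3 in partial pressures."""
    return p_nh3 ** 2 / (p_n2 * p_h2 ** 3)


def equilibrium_extent(p_n2, p_h2, k_p, iterations=100):
    """Bisect on x, the drop in N2 partial pressure, starting with no NH3."""
    lo, hi = 0.0, min(p_n2, p_h2 / 3.0)   # Q_p = 0 at lo, Q_p grows without bound near hi
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        if q_p(p_n2 - mid, p_h2 - 3.0 * mid, 2.0 * mid) < k_p:
            lo = mid      # Q_p < K_p: reaction must go further forward
        else:
            hi = mid      # Q_p >= K_p: this extent overshoots
    return 0.5 * (lo + hi)


K_P = 0.5                      # hypothetical value, atm^-2
P_N2, P_H2 = 1.0, 3.0          # hypothetical starting pressures, atm
x = equilibrium_extent(P_N2, P_H2, K_P)
print(f"N2 consumed: {x:.4f} atm, NH3 formed: {2 * x:.4f} atm")
print(f"Q_p at that point: {q_p(P_N2 - x, P_H2 - 3 * x, 2 * x):.6f}")
```

Bisection is chosen here for robustness over speed; it needs only the sign of $Q_p - K_p$ and cannot step outside the physically allowed range of extents.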

Responses to External Stress

Concentration and Temperature Fluctuations

The most famous guiding principle for predicting how an equilibrium system responds to change is Le Chatelier's principle, formulated by Henri Louis Le Chatelier in 1884. The principle states that if a system at equilibrium is subjected to a "stress" (a change in concentration, temperature, or pressure), the system will shift its equilibrium position in a direction that tends to counteract or "nullify" that stress. For example, adding more of a reactant creates a "surplus" that the system attempts to consume by shifting toward the product side. Conversely, removing a product creates a "void" that the system attempts to fill by driving the forward reaction more vigorously. This behavior makes equilibrium systems remarkably resilient, as they possess an inherent "self-righting" mechanism similar to biological homeostasis.

Temperature changes represent a unique type of stress because, unlike concentration or pressure changes, they actually change the value of the equilibrium constant $K$ itself. To predict the direction of the shift, we must treat heat as either a reactant or a product depending on the thermochemistry of the reaction. In an exothermic reaction, heat is released (produced), so increasing the temperature is akin to adding more "product," which shifts the equilibrium to the left toward the reactants. In an endothermic reaction, heat is absorbed (consumed), so raising the temperature provides a necessary "reactant," shifting the equilibrium to the right. This sensitivity to temperature is why $K$ values are always reported with a specific temperature, as the balance of a chemical system is inextricably linked to the thermal energy available to the particles.

The Impact of Pressure and Volume Changes via Le Chatelier's Principle

For reactions involving gases, changes in the volume of the container or the total pressure can significantly alter the equilibrium position. When the volume of a reaction vessel is decreased, the pressure increases, and the gas molecules become more crowded. According to Le Chatelier's principle, the system will attempt to reduce this pressure by shifting toward the side of the chemical equation that has fewer moles of gas. For example, in the reaction $2SO_2(g) + O_2(g) \rightleftharpoons 2SO_3(g)$, there are three moles of gas on the reactant side and only two moles on the product side. Increasing the pressure will drive the reaction to the right, as producing $SO_3$ effectively "shrinks" the number of particles in the container, thereby lowering the pressure.

If a reaction has an equal number of gas moles on both sides, such as $H_2(g) + I_2(g) \rightleftharpoons 2HI(g)$, a change in pressure or volume will have no effect on the equilibrium position. The system has no "spatial" preference, as both sides occupy the same volume at a given pressure. It is also worth noting that adding an inert gas, like helium or argon, to a rigid container at constant volume does not shift the equilibrium. Although the total pressure increases, the partial pressures (and thus the concentrations) of the reacting gases remain unchanged. This nuance is critical for industrial chemists who must distinguish between changes that affect the "crowding" of the actual reacting particles versus those that merely change the total environment of the vessel.
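These pressure effects can be checked against the reaction quotient. The sketch below assumes ideal gases and a system that starts at equilibrium ($Q_c = K_c$); changing the volume by a factor $f$ multiplies every gas concentration by $1/f$, so $Q_c$ is multiplied by $(1/f)^{\Delta n}$. The function name and the value of $K_c$ are hypothetical.

```python
def q_after_volume_change(k, delta_n_gas, volume_factor):
    """Q_c immediately after the volume is multiplied by volume_factor.

    Assumes the mixture was at equilibrium (Q_c = K_c) before the change.
    delta_n_gas = (moles of gaseous products) - (moles of gaseous reactants).
    """
    return k * (1.0 / volume_factor) ** delta_n_gas


K = 10.0  # hypothetical equilibrium constant

# 2 SO2 + O2 <=> 2 SO3: delta_n = 2 - 3 = -1, volume halved
q_so3 = q_after_volume_change(K, delta_n_gas=-1, volume_factor=0.5)

# H2 + I2 <=> 2 HI: delta_n = 2 - 2 = 0, volume halved
q_hi = q_after_volume_change(K, delta_n_gas=0, volume_factor=0.5)

print(q_so3, q_hi)
```

Halving the volume leaves the $SO_3$ system with $Q_c < K_c$, so it shifts right, while the $HI$ system still has $Q_c = K_c$ and does not move. An inert gas added at constant volume changes none of the reacting concentrations, so it corresponds to no change in $Q_c$ at all.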

Energetics and Thermodynamic Stability

Free Energy and the Equilibrium Position

The deep "why" behind chemical equilibrium is found in the field of thermodynamics, specifically in the concept of Gibbs free energy ($G$). Every chemical system seeks to reach its state of lowest possible free energy. The change in free energy for a reaction at any given moment is described by the equation:

$$\Delta G = \Delta G^\circ + RT \ln Q$$

In this expression, $\Delta G^\circ$ is the standard free energy change, $R$ is the gas constant, $T$ is the absolute temperature, and $Q$ is the reaction quotient. As a reaction moves toward equilibrium, the value of $\Delta G$ approaches zero. When $\Delta G = 0$, the system has no more "work" it can perform and has reached its most stable configuration under the given conditions.

At the point of equilibrium, where $Q = K$ and $\Delta G = 0$, the equation simplifies to a fundamental relationship:

$$\Delta G^\circ = -RT \ln K$$

This equation is one of the most important in all of chemistry because it directly links the equilibrium constant $K$, which describes the composition of the mixture, to the standard free energy change $\Delta G^\circ$, which describes the energetics of the reaction. It tells us that a reaction with a large, negative $\Delta G^\circ$ (highly exergonic) will have a very large equilibrium constant, meaning it will favor products. Conversely, a reaction with a large, positive $\Delta G^\circ$ will have a tiny $K$, favoring reactants. This provides a bridge between the "tendency" of a reaction to occur and the actual "extent" to which it proceeds, allowing scientists to calculate equilibrium positions from calorimetric data alone.
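A short numerical sketch shows how the two free-energy relations fit together. The standard free energy change of $-10$ kJ/mol and the temperature of 298.15 K are hypothetical inputs; only the gas constant is a physical value.

```python
import math

R = 8.314  # gas constant, J/(mol*K)


def k_from_dg0(dg0, temperature):
    """Equilibrium constant from dG0 = -RT ln K (dg0 in J/mol)."""
    return math.exp(-dg0 / (R * temperature))


def delta_g(dg0, temperature, q):
    """Free energy change at reaction quotient q: dG = dG0 + RT ln Q."""
    return dg0 + R * temperature * math.log(q)


T = 298.15        # K, hypothetical
DG0 = -10_000.0   # J/mol, hypothetical

K = k_from_dg0(DG0, T)
print(f"K = {K:.1f}")
print(f"dG at Q = 1: {delta_g(DG0, T, 1.0):.1f} J/mol")
print(f"dG at Q = K: {delta_g(DG0, T, K):.6f} J/mol")
```

At $Q = 1$ the free energy change equals $\Delta G^\circ$ itself, and it rises to zero as $Q$ grows to $K$, at which point the reaction has no remaining driving force.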

Temperature Dependence and Van't Hoff Equations

The variation of the equilibrium constant with temperature is mathematically described by the Van't Hoff equation. This relationship shows that the natural logarithm of the equilibrium constant is a linear function of the inverse of the temperature ($1/T$), provided the standard enthalpy change ($\Delta H^\circ$) of the reaction is approximately constant over the temperature range considered. The slope of this line is $-\Delta H^\circ / R$. For an exothermic reaction, the slope is positive, meaning that as temperature increases (and $1/T$ decreases), the value of $\ln K$ (and thus $K$) decreases. For an endothermic reaction, the slope is negative, meaning $K$ increases with temperature. This quantitative description allows chemists to estimate the equilibrium constant at another temperature if they know its value at one temperature and the enthalpy of the reaction.
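In its two-point form, the relationship reads $\ln(K_2 / K_1) = -(\Delta H^\circ / R)\,(1/T_2 - 1/T_1)$. The sketch below applies it under the assumption that $\Delta H^\circ$ does not vary between the two temperatures. The starting constant of 100 at 400 K is hypothetical, and the enthalpy magnitude of 92 kJ/mol is chosen only because it is close to the commonly quoted value for ammonia synthesis.

```python
import math

R = 8.314  # gas constant, J/(mol*K)


def k_at_temperature(k1, t1, t2, dh0):
    """Two-point van't Hoff estimate of K at t2, assuming constant dH0 (J/mol)."""
    return k1 * math.exp(-(dh0 / R) * (1.0 / t2 - 1.0 / t1))


K1 = 100.0            # hypothetical K at 400 K
T1, T2 = 400.0, 500.0

k_exo = k_at_temperature(K1, T1, T2, dh0=-92_000.0)   # exothermic
k_endo = k_at_temperature(K1, T1, T2, dh0=+92_000.0)  # endothermic

print(f"exothermic:  K falls from {K1} to {k_exo:.3g}")
print(f"endothermic: K rises from {K1} to {k_endo:.3g}")
```

The same 100 K increase pushes the two constants in opposite directions by the same multiplicative factor, which is the quantitative counterpart of treating heat as a "product" or a "reactant".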

The Van't Hoff relationship is essential for industries that operate at extreme temperatures. For example, if a reaction is endothermic, increasing the temperature not only speeds up the reaction (kinetics) but also shifts the equilibrium to favor more product (thermodynamics). This "double win" makes high-temperature operations very attractive for such reactions. However, for exothermic reactions, there is a "tug-of-war": higher temperatures make the reaction faster but actually reduce the maximum possible yield at equilibrium. Managing this delicate balance between the rate of production and the thermodynamic limit is the primary challenge of chemical engineering, requiring a deep understanding of the energetic "logic" that governs molecular behavior.

Applications in Industrial Synthesis

Optimization in the Haber-Bosch Process

Perhaps the most famous industrial application of chemical equilibrium is the Haber-Bosch process for the synthesis of ammonia. Developed in the early 20th century by Fritz Haber and Carl Bosch, this process combines nitrogen from the air with hydrogen to form ammonia ($NH_3$), the key feedstock for synthetic nitrogen fertilizers. The reaction is exothermic and involves a reduction in the number of gas moles: $N_2(g) + 3H_2(g) \rightleftharpoons 2NH_3(g)$. According to Le Chatelier's principle, the yield of ammonia is maximized by low temperatures and high pressures. However, at low temperatures, the reaction rate is so slow that it is not commercially viable, even if the equilibrium "favors" the product.

To solve this, the process uses a catalyst (usually iron-based) to speed up the reaction at more moderate temperatures, typically around 400 to 500 °C. While this temperature is higher than what would be "ideal" for the equilibrium yield, it ensures that the mixture approaches equilibrium quickly. To compensate for the unfavorable shift caused by the heat, the pressure is increased dramatically (to around 200 atmospheres), which pushes the equilibrium back toward ammonia. Furthermore, the ammonia is continuously liquefied and removed from the system as it forms. This constant removal of product keeps $Q$ smaller than $K$, so the reaction never settles at equilibrium and instead keeps driving forward to replace the "lost" product, a masterful application of equilibrium logic to sustain global food production.

Buffering Systems in Biological Environments

Equilibrium is not just a tool for industry; it is a fundamental requirement for life. In the human body, the pH of blood must be maintained within a very narrow range (roughly 7.35 to 7.45) to prevent protein denaturation and metabolic failure. This stability is achieved through buffering systems, which are equilibrium mixtures of weak acids and their conjugate bases. The most critical of these is the bicarbonate buffer system, governed by the following series of equilibria:

$$CO_2(g) + H_2O(l) \rightleftharpoons H_2CO_3(aq) \rightleftharpoons HCO_3^-(aq) + H^+(aq)$$

This interconnected chain of reactions allows the body to respond instantly to changes in acidity.

If the concentration of $H^+$ ions (acid) in the blood increases, the equilibrium shifts to the left, according to Le Chatelier's principle, consuming the excess acid and forming more $CO_2$ and water. The excess $CO_2$ is then exhaled by the lungs. Conversely, if the blood becomes too basic (low $H^+$), the equilibrium shifts to the right, causing more $CO_2$ to dissolve and react to produce $H^+$ ions, restoring the pH. This elegant "dynamic balance" demonstrates how biological systems exploit the mathematical laws of equilibrium to maintain homeostasis in a fluctuating environment. Without the rapid, reversible nature of these chemical shifts, the complex, fragile chemistry of life would be unable to withstand the internal and external stresses of existence.
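The buffer's behavior can be estimated with the Henderson-Hasselbalch form of the equilibrium expression, $pH = pK_a + \log_{10}([HCO_3^-] / [CO_2]_{dissolved})$. This relation and the constants in the sketch below are not taken from the text above; they are standard textbook approximations for blood plasma (an apparent $pK_a$ of about 6.1 and a $CO_2$ solubility of about 0.03 mmol per liter per mmHg), and the input values are illustrative.

```python
import math

PKA_APPARENT = 6.1      # assumed textbook approximation for plasma
CO2_SOLUBILITY = 0.03   # mmol/(L*mmHg), assumed textbook approximation


def blood_ph(bicarbonate_mmol_per_l, p_co2_mmhg):
    """Estimate pH from bicarbonate concentration and CO2 partial pressure."""
    dissolved_co2 = CO2_SOLUBILITY * p_co2_mmhg
    return PKA_APPARENT + math.log10(bicarbonate_mmol_per_l / dissolved_co2)


normal = blood_ph(24.0, 40.0)         # typical illustrative values
retained_co2 = blood_ph(24.0, 60.0)   # more dissolved CO2, same bicarbonate
print(f"typical: pH {normal:.2f}, elevated CO2: pH {retained_co2:.2f}")
```

With these illustrative inputs the estimate falls inside the 7.35 to 7.45 window, and raising the $CO_2$ pressure lowers the pH, consistent with the rightward shift of the equilibrium chain that releases $H^+$.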

References

- Atkins, P., & de Paula, J., "Atkins' Physical Chemistry", Oxford University Press, 2018.

- Zumdahl, S. S., & Zumdahl, S. A., "Chemistry", Cengage Learning, 2017.

- Guldberg, C. M., & Waage, P., "Studies Concerning Affinity", Christiania, 1864.

- Le Chatelier, H. L., "Sur l'équilibre des systèmes chimiques", Comptes Rendus de l'Académie des Sciences, 1884.

Recommended Readings

- The Same and Not the Same by Roald Hoffmann — A beautifully written exploration of the dualities in chemistry, including the balance between static and dynamic states.

- The Principles of Chemical Equilibrium by Kenneth Denbigh — A classic, rigorous text that explores the thermodynamic foundations of equilibrium for those seeking a deep mathematical dive.

- Elegant Solutions: Ten Beautiful Experiments in Chemistry by Philip Ball — Features a chapter on the discovery of the Haber-Bosch process and the historical drama of controlling chemical balance.